Android Face Tracking: Challenges, Benefits, Tips to Choose an SDK

Face tracking lets your apps deliver immersive camera experiences users haven't seen before. Desktop products generally achieve stable facial tracking performance, while mobile solutions still struggle to deliver high-performing AR features.

Mobile remains a challenge because the environment adds constraints of its own: limited CPU power, limited memory, and the so-called "constrained devices". If this resonates with your business or technical needs, this post will guide you through Android face tracking technology, with core tips and tricks on implementing ML-enabled capabilities in mobile apps.

We'll also share our tried-and-tested tips on choosing the best facial tracking software and show how Banuba's Android facial tracking SDK helps you avoid spending a fortune on costly app development and long-running projects.

Face Recognition vs Face Tracking: What's the Difference

Before we delve into the aspects of face tracking on mobile, it helps to understand the difference between face detection, face tracking, and face recognition.

Non-techies often confuse these terms whereas techies may use them interchangeably. However, the functionality of the solutions built with these technologies and their use cases are totally different.

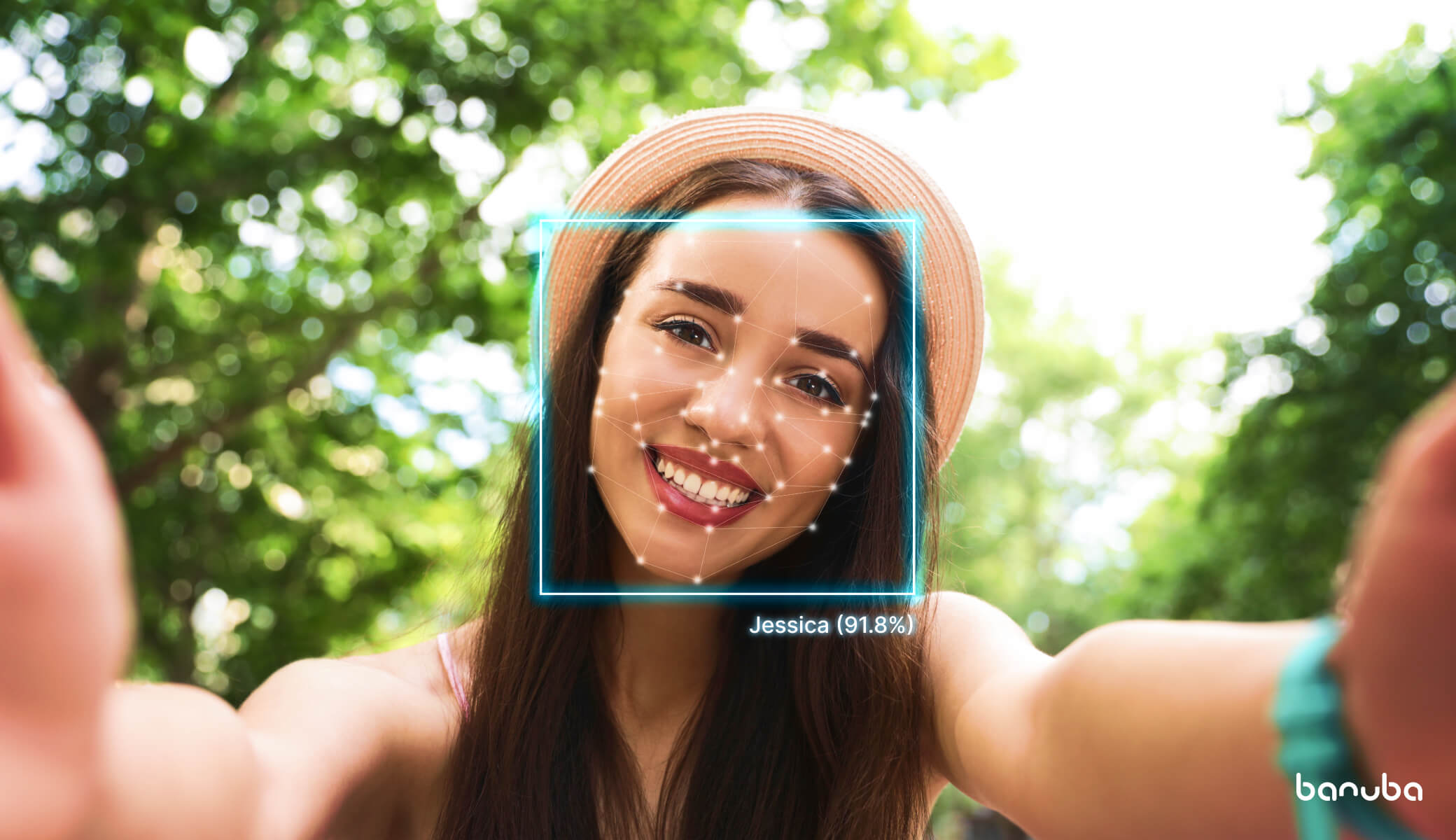

What is a facial recognition SDK?

With a face recognition SDK, the algorithms capture a digital image of an individual’s face and compare it to images in a database of stored records to identify whose face it is.

Such SDKs are used to build biometric and entry pass systems, security systems, surveillance and monitoring tools, and fraud prevention solutions that verify a person by an image or detect criminals.

What is a facial tracking SDK?

Whereas facial recognition is used mainly in security and networking solutions, facial tracking is used in a variety of solutions that don't care who you are.

Rather, the technology creates an empathic camera that “understands” the human face and allows for different face modifications.

Six must-try facial tracking SDK use cases:

- 3D avatar generation that visually matches real user faces and copies their facial expressions in real time

- 3D emojis that can be controlled with user facial expressions

- Augmented commerce (virtual try-on)

- Morphing (solutions to align two different faces/objects to produce an in-between image)

- Beautification (makeup try-ons, digital camera enhancement)

- Analytics (insights into user behavior, mood, or physical parameters like gender, age, ethnicity, or even skin condition)

To put it simply:

- All facial recognition SDKs use face detection and tracking.

- Not all facial tracking SDKs have a facial recognition feature.

Android Face Tracking: Benefits, Challenges, SDK Solutions

The technical specification of facial tracking SDKs often features such parameters as landmarks, FPS, angles, device, and platform support to name but a few.

How does each criterion influence the user experience? What does it mean in the context of mobile products? Let's dive deep.

Facial landmarks tracking

With on-camera face detection, the algorithms extract landmarks, that is, key facial features such as the eyes, eyebrows, nose, mouth, and chin. The more landmarks are detected, the more accurately face modifications can be applied.

Most facial tracking SDKs support at least 68 landmarks. In the context of mobile, 86 landmarks are enough to cover all important facial features and enable robust facial tracking.

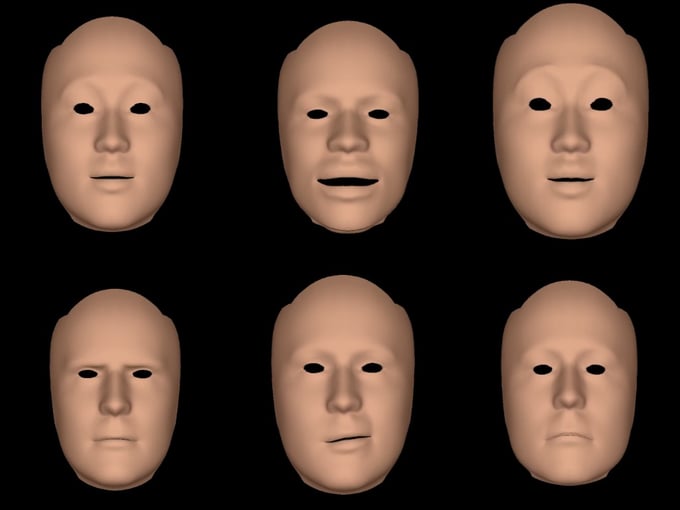

Banuba’s innovative no-landmark facial tracking SDK

Our face tracking SDK is not built on landmarks. Instead of landmarks, we recognize 37 face characteristics represented as morphs for the default face mesh.

Banuba's face mesh examples

These morphs include 30 parameters with facial expressions and anthropometry plus 7 parameters that describe the position of the face in a photo (head tilts and face angles).
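To make the 30 + 7 split more tangible, here is a minimal sketch of how such a parameter set could be stored and applied to a default mesh. It assumes a plain linear blend (each morph shifts the base vertices by a weighted offset), which is a common way to use morphs but is not confirmed to be how Banuba's SDK works. The names `FaceParams` and `apply_morphs` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

NUM_MORPH_PARAMS = 30  # expressions and anthropometry (count taken from the text)
NUM_POSE_PARAMS = 7    # face position and angles (count taken from the text)

Vertex = Tuple[float, float, float]


@dataclass
class FaceParams:
    """Hypothetical container for the 37 numbers describing one face."""
    morphs: List[float]
    pose: List[float]

    def __post_init__(self) -> None:
        if len(self.morphs) != NUM_MORPH_PARAMS:
            raise ValueError("expected 30 morph weights")
        if len(self.pose) != NUM_POSE_PARAMS:
            raise ValueError("expected 7 pose values")


def apply_morphs(base_mesh: Sequence[Vertex],
                 morph_deltas: Sequence[Sequence[Vertex]],
                 weights: Sequence[float]) -> List[Vertex]:
    """Assumed linear blend: vertex = base + sum(weight_i * delta_i)."""
    result = []
    for v_idx, base_vertex in enumerate(base_mesh):
        vertex = list(base_vertex)
        for weight, delta in zip(weights, morph_deltas):
            for axis in range(3):
                vertex[axis] += weight * delta[v_idx][axis]
        result.append(tuple(vertex))
    return result
```

Whatever the mesh resolution, the tracker in this sketch only has to estimate 37 numbers per frame; the mesh itself is rebuilt from them.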

What benefits does it provide?

- Fast performance. Since we don’t track landmarks but recognize only 37 face parameters, we achieve much faster SDK performance and better accuracy. The algorithms need to process only 37 numbers, which saves computing resources.

- Patented anti-jitter. The saved capacity lets us run our patented anti-jitter technology, which reduces jitter and keeps facial tracking stable, specifically on mobile.

- We run the algorithms several times within one frame, which separates the constant data (the face) from the variable data (the noise). We use face detection algorithms to spot and process the noise within a single frame, so the SDK shows no lag.
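The idea of separating constant data from noise within one frame can be illustrated with a generic sketch. The patented method itself is not described here, so the code below only assumes the simplest possible approach: estimate the same parameters several times for one frame, treat the per-parameter mean as the stable value, and treat the spread as noise. The function name `stabilize` is hypothetical.

```python
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def stabilize(estimates: Sequence[Sequence[float]]) -> Tuple[List[float], List[float]]:
    """Combine several parameter estimates made for the same frame.

    Returns (stable, noise): the per-parameter mean and the
    per-parameter standard deviation across the repeated runs.
    """
    if not estimates:
        raise ValueError("at least one estimate is required")
    columns = list(zip(*estimates))  # one column per parameter
    stable = [mean(column) for column in columns]
    noise = [pstdev(column) for column in columns]
    return stable, noise
```

Because all runs use the same frame, this kind of smoothing adds no frame-to-frame delay, unlike filters that average over previous frames.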

Low-end device support

People now hold on to their old phones longer, so it’s important to consider how a facial tracking SDK performs on low-end devices.

As of 2019, iPhone 6, iPhone 7, and Samsung Galaxy J5 are the most popular mobile phones in the UK, the USA, and a range of European countries. Xiaomi Redmi Note 4 and Samsung Galaxy J2 Prime keep the leading positions in India and Argentina respectively.

Most facial tracking SDKs run perfectly fine on iPhone 8 yet fail to work well on iPhone 5s or the Android-based Nexus 6P, or they don’t support them at all. This significantly limits device coverage and hence your target audience.

Our facial tracking SDK supports iPhone 5s and higher, which covers 95% of all iOS devices available on the market. For Android, we support Android 5.0 or higher with the Camera2 API and OpenGL ES 3.0, which covers 75% of all Android devices.

FPS (frames per second)

When choosing the best face tracking SDK, one should understand the difference between the FPS of the camera and the FPS of the FRX (face recognition).

- FPS of the camera: the number of frames that a camera can stream or capture in a given second.

- FPS of FRX: the number of frames that the technology can process and deliver to the GPU in a given second.

Case in point

The camera is able to deliver 30 FPS, but the FRX algorithms can deliver only 15 FPS. In a demo test, the camera may show 30 FPS, but the user experience will lag because the technology can't keep up with the camera's frame flow.
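The arithmetic behind this case is simple enough to sketch. The helper names below are hypothetical, and the model is deliberately simplified: it assumes the slower of the two stages sets the rate the user actually sees.

```python
def effective_fps(camera_fps: float, tracker_fps: float) -> float:
    """The user sees tracked frames at the rate of the slower stage."""
    return min(camera_fps, tracker_fps)


def skipped_frames_per_second(camera_fps: float, tracker_fps: float) -> float:
    """Camera frames the tracker cannot process each second."""
    return max(0.0, camera_fps - tracker_fps)


def frame_budget_ms(camera_fps: float) -> float:
    """Time the tracker has per frame if it must keep up with the camera."""
    return 1000.0 / camera_fps
```

With a 30 FPS camera and a 15 FPS tracker, `effective_fps(30, 15)` is 15 and every second frame goes untracked. To keep up, the tracker would need to finish each frame within `frame_budget_ms(30)`, roughly 33 ms.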

Besides, on mobile, most vendors forcibly lower the processor frequency under sustained loads (such as facial tracking), which leads to a loss of FPS.

The system detects that it's lagging and responds by raising the frequency again. One second it delivers 30 FPS, the next only 15, which results in wave-like performance.

A facial tracking SDK needs to sustain a stable FPS over time.

A low-end mobile device invariably delivers a lower FPS because of its limited computing capabilities. A stable 30 FPS is a good benchmark for lag-free facial tracking on low-end devices. On high-end ones, it’ll be significantly higher.
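When you evaluate an SDK, one way to check stability over time is to log a timestamp for every processed frame and count frames per one-second window. This is a minimal sketch; the function names and the 10% tolerance are assumptions for illustration, not values from any SDK.

```python
from typing import Dict, List, Sequence


def fps_per_second(timestamps: Sequence[float]) -> List[int]:
    """Count processed frames in each one-second window.

    `timestamps` are frame completion times in seconds, in ascending order.
    """
    if not timestamps:
        return []
    start = timestamps[0]
    buckets: Dict[int, int] = {}
    for t in timestamps:
        window = int(t - start)
        buckets[window] = buckets.get(window, 0) + 1
    return [buckets.get(i, 0) for i in range(max(buckets) + 1)]


def is_stable(fps_series: Sequence[int], target: int = 30,
              tolerance: float = 0.1) -> bool:
    """True if no window falls more than `tolerance` below the target FPS."""
    return all(fps >= target * (1 - tolerance) for fps in fps_series)
```

A session that alternates between 30 and 15 frames per window fails this check even though its average might look acceptable, which is exactly the wave-like behavior to watch for.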

Example: Banuba’s mask is fast and stable on iPhone 5s while the other mask trembles and lags.

Angles

If the user tilts their head forward or back, facial tracking may be lost, and with it the functionality of the app built on top of it.

If a facial tracking SDK supports wide angles, it can detect and modify a face even when the user is not looking straight at the camera. Wide angles provide a better user experience too: you can move and check your angles, and the mask won't fall off.

The most powerful SDKs support ±90 degrees and full phone rotation.
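In app code, a supported angle range usually turns into a simple guard: hide or freeze the effect once the head pose leaves the range. The sketch below assumes the tracker reports yaw and pitch in degrees; the function names are hypothetical, and the ±90 default mirrors the figure quoted above.

```python
def normalize_angle(angle_deg: float) -> float:
    """Map any angle to the range [-180, 180)."""
    return (angle_deg + 180.0) % 360.0 - 180.0


def is_within_tracking_range(yaw_deg: float, pitch_deg: float,
                             max_angle_deg: float = 90.0) -> bool:
    """True if both head angles are inside the supported range."""
    yaw = normalize_angle(yaw_deg)
    pitch = normalize_angle(pitch_deg)
    return abs(yaw) <= max_angle_deg and abs(pitch) <= max_angle_deg
```

Lowering `max_angle_deg` to the limit you actually measured for a given SDK lets the app fade the mask out gracefully instead of letting it jump when tracking is lost.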

Example: Banuba's mask is lost at 80 degrees vs. 70 degrees for the other one.

Skin color

Another parameter to check is how a face tracker SDK performs on people with different skin colors, e.g. African American and Asian users. The internet is full of examples of the technology being accused of racism.

The root cause, however, lies in the datasets on which the algorithms were trained to recognize and modify faces.

Balanced datasets, meaning those that include faces with different anthropometry (ages, genders, nationalities), make it possible to achieve consistent quality in face modification technologies, e.g. face masks.

Example: Banuba’s mask is faster compared to the other one.

Lighting

Unless trained to detect faces in low light, the algorithms fail to identify the proper face boundaries when lighting decreases and mix up the face with the background.

There are several ways to approach this. One is to switch on the camera light so that the face is lit well enough for the tracker to work. Another is a "low lighting detection" mode, in which the algorithms pause instead of wasting the phone's power and wait for proper lighting.

However, the best way is to have the face tracker SDK algorithms trained to detect faces in low light. With this, the phone can capture and track the face even if the human eye can hardly find it.
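For SDKs that are not trained for low light, the "low lighting detection" mode described above can be approximated with a brightness gate in front of the tracker. This is a minimal sketch, not any SDK's API: it assumes frames arrive as RGB pixel tuples, uses the standard Rec. 601 luma weights, and the threshold of 40 is an arbitrary illustrative value you would tune per device.

```python
from typing import Iterable, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255


def mean_luma(pixels: Iterable[Pixel]) -> float:
    """Average perceived brightness of a frame (Rec. 601 weights)."""
    total = 0.0
    count = 0
    for red, green, blue in pixels:
        total += 0.299 * red + 0.587 * green + 0.114 * blue
        count += 1
    if count == 0:
        raise ValueError("frame has no pixels")
    return total / count


def should_run_tracker(pixels: Iterable[Pixel], min_luma: float = 40.0) -> bool:
    """Skip tracking on frames that are too dark to be worth processing."""
    return mean_luma(pixels) >= min_luma
```

In practice you would compute the brightness on a downscaled frame so that the gate costs far less than the tracking it saves.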

Occlusion

If the user covers their face with a scarf, glasses, a hand, or another object, facial tracking might be lost. Most face tracker SDKs support occlusion to some extent.

Example: Banuba’s mask is accurate with glasses-occlusion while the other one is not.

Multi-face

In desktop or outdoor video surveillance systems, the algorithms can track many faces at a time without the loss of efficiency.

On mobile, the optimal number of faces that can be tracked simultaneously without compromising performance is three to four at most.
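If the detector returns more faces than the device can comfortably track, the app has to decide which ones to keep. A simple policy, assumed here purely for illustration, is to keep the largest faces, since they are usually closest to the camera. The `select_faces` helper and the box format are hypothetical.

```python
from typing import List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height) in pixels


def select_faces(boxes: Sequence[Box], max_faces: int = 4) -> List[Box]:
    """Keep at most `max_faces` detections, preferring the largest boxes."""
    by_area = sorted(boxes, key=lambda box: box[2] * box[3], reverse=True)
    return by_area[:max_faces]
```

On weaker devices you could lower `max_faces` to 1 or 2 and raise it only when the measured FPS leaves headroom.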

How an Android Face Tracking SDK Makes a Difference

Mobile devices have to handle a range of factors that influence face trackers. Among them:

- the motion of the camera and shakes

- environmental factors (low lighting, flare spots, dirty camera)

- lower resolution of mobile cameras compared to security cameras

- limited computing capabilities of mobile devices

- battery drain and limited memory

- an extensive range of mobile phones with different camera fields of view on each platform, especially Android

Besides, you need to take into account the short-term operation mode typical for most mobile solutions. Users open the app, have it working for a couple of minutes and then close it.

In long-term mode, the operating system can forcibly reduce the system speed to save battery and protect the device from overheating. In the same way, dynamic frequency scaling, or CPU throttling, is commonly used in computers to slow the system down automatically whenever possible and use less energy.

However, real-time video processing on mobile requires significant computing power. If the system reduces the clock frequency, the user experience suffers too.

The solution? Non-stop technology optimization.

Engineers are trying to find the golden mean between what’s available and what’s needed, both for algorithms and for devices, to optimize facial tracking SDK performance specifically on mobile.

From training neural networks to detect a face in different conditions to tweaking the technology under the hood, face tracker optimization for mobile should be treated as an ongoing set of measures rather than a one-time endeavor.

Summing up

The most significant criteria for a facial tracking SDK for Android and iOS are as follows:

- Device support

- Stable FPS or lag-free performance

- Wide angles supported

- Skin color and occlusion

- Lighting optimization

The other important criteria when choosing the best face tracker include the battery drain, SDK size, and device coverage.